Turning Feelings into Data: Applying Natural Language Processing to Employee Sentiment

Employees give feedback and comments to express how they're feeling. Can vendors specializing in natural language processing help organizations scale their ability to understand this data?

Nowadays, there is little question that deeply understanding and addressing issues of employee engagement is crucial to driving business profit and performance. [1] [2] Unfortunately for companies tackling engagement issues, processing the requisite data is a serious challenge. While surveys remain one of the most effective methods of measuring employee engagement [3], much of the data they collect is hard to analyze because it is typically unstructured text.

Survey comments are a treasure trove of data to a people analytics team, providing the depth and color that leaders need not only to flag that a particular engagement measure is low, but more importantly to understand why that measure is low. Unfortunately for these practitioners, these (often lengthy) comments are generated in extremely high volumes and are messy, with grammar errors, spelling mistakes, and colloquialisms. Only a few companies have the resources to devote the tens (if not hundreds) of hours required to manually read and sort these comments into quantifiable buckets. For those lacking that luxury, turning this feedback into a usable dataset proves to be an insurmountable challenge. [4]

This unmet need is where companies like Ultimate Perception come in. Perception, formerly known as Kanjoya, pairs an employee survey and performance feedback collection platform with a proprietary natural language processing (NLP) algorithm that makes the process of turning massive amounts of unstructured text into quantifiable insights almost instantaneous. Its machine learning technology focuses on two tasks:

- First, its models analyze each employee-submitted comment and assign a probability that the comment discusses each of over 70 specific themes (e.g., work-life balance, senior leadership, communication skills).

- Second, the models also determine the probability that each of 100 potential sentiments is expressed in the writer’s tone (e.g., confusion, excitement, frustration). [5]

This coupling of two major fields of NLP – theme clustering and sentiment analysis – is where Perception’s machine learning technology shines, specifying which themes employees are unhappy about, and which percentages and subgroups of employees are unhappy. Not only does this process save time and money; it also reduces the risk of an analyst introducing bias in the way they categorize and interpret comments. [6]
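
To make the coupling concrete, here is a minimal Python sketch of how per-comment theme and sentiment probabilities might be rolled up into subgroup-level results. Everything in it is an assumption for illustration: the record layout, the `dept` field, the 0.5 threshold, the set of sentiments treated as negative, and the scores themselves are made up, and none of it reflects Perception’s proprietary models or output format.

```python
from collections import defaultdict

# Hypothetical model output: one record per comment, with invented probabilities.
comments = [
    {"dept": "Sales",
     "themes": {"work-life balance": 0.9, "senior leadership": 0.1},
     "sentiments": {"frustration": 0.8, "excitement": 0.1}},
    {"dept": "Sales",
     "themes": {"work-life balance": 0.7, "senior leadership": 0.6},
     "sentiments": {"excitement": 0.7, "frustration": 0.2}},
    {"dept": "Engineering",
     "themes": {"senior leadership": 0.8, "work-life balance": 0.2},
     "sentiments": {"confusion": 0.6, "excitement": 0.3}},
]

# Assumed for this sketch: which sentiment labels count as "unhappy".
NEGATIVE_SENTIMENTS = {"frustration", "confusion"}


def negative_share_by_theme(records, theme_threshold=0.5):
    """Return {(theme, dept): share of tagged comments whose top sentiment is negative}."""
    totals = defaultdict(int)
    negatives = defaultdict(int)
    for rec in records:
        # Treat the most probable sentiment as the comment's overall tone.
        top_sentiment = max(rec["sentiments"], key=rec["sentiments"].get)
        for theme, prob in rec["themes"].items():
            # A comment counts toward every theme that clears the threshold.
            if prob >= theme_threshold:
                key = (theme, rec["dept"])
                totals[key] += 1
                if top_sentiment in NEGATIVE_SENTIMENTS:
                    negatives[key] += 1
    return {key: negatives[key] / totals[key] for key in totals}


shares = negative_share_by_theme(comments)
for (theme, dept), share in sorted(shares.items()):
    print(f"{dept:12s} {theme:20s} {share:.0%} negative")
```

On this toy data, half of the Sales comments tagged with work-life balance read as negative, which is the kind of theme-by-subgroup breakdown the paragraph above describes.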

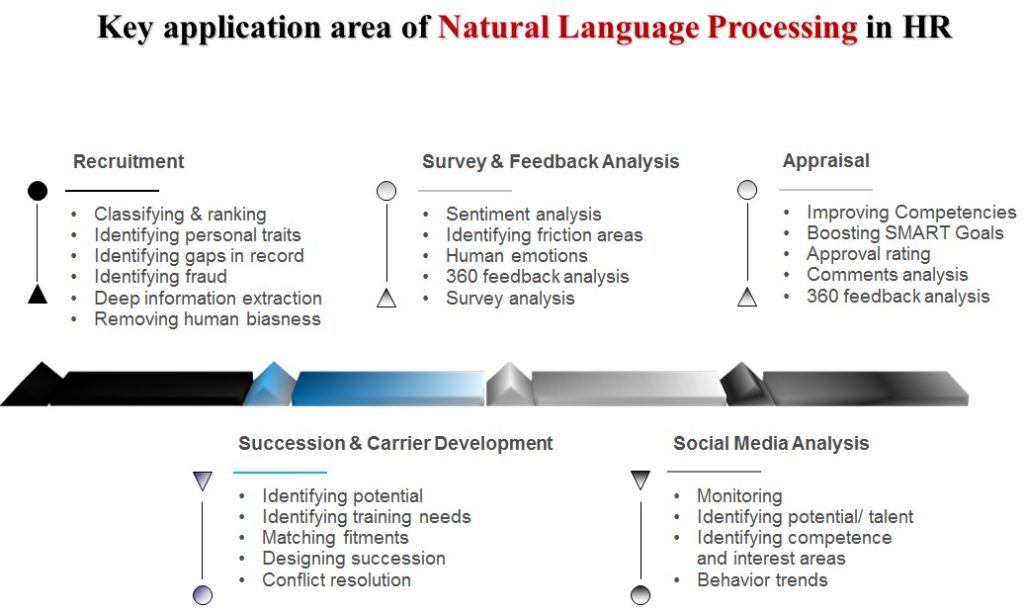

Long term, Perception is seeking to extend its NLP software into the many other potential applications across the HR and employee lifecycle (see Exhibit 1). [7] The company already applies its algorithms to analyzing performance reviews, a process it claims can reduce the impact of biased language on promotions, especially when combined with feedback recipients’ demographic data like gender and ethnicity. [8] In fact, combining NLP with other datasets or even other algorithms holds great potential for Perception, as the company could combine quantitative and qualitative survey data to develop robust sentiment predictions, or even craft individually tailored engagement action plans for leaders based on the analysis [9]. The technology need not be applied only to volunteered comments; technically, sentiments and themes could be drawn from passively collected employee-generated text as well (e.g., emails and messenger chats) [10].

Exhibit 1: Potential NLP applications in HR

With such potential, it’s no wonder Kanjoya was acquired by long-standing HR industry stalwart Ultimate Software, with similar attention devoted to its competitors by the likes of venture capitalists [12] and LinkedIn [11]. So should companies go all-in on investing in an HR NLP vendor? Not quite: unlike other applications of NLP technology such as customer sentiment analysis or text data mining, employee-generated data presents its own unique challenges.

To conclude, I pose three potential challenges to consider:

- Organizational culture-specific vernacular: Consider cases in which a phrase specific to that company’s culture (e.g., Salesforce’s discussion of “Ohana”) is used pervasively in comments. How can Perception’s technology be developed in a scalable way across companies to tag those term clusters and sentiments accurately?

- Top-down ontology vs. bottom-up clustering: Related to the above, Perception’s theme clustering is only as accurate as the training data it is based on, i.e., historical text data sets and data from other companies. What happens if a new theme emerges that isn’t captured by Perception’s out-of-the-box ontology? Can this issue be addressed, given that machine learning algorithms for bottom-up clustering (i.e., self-generated custom clusters) have not yet caught up?

- Levels of accuracy: While Perception claims accuracy can top 95% for some companies, it acknowledges actual levels may be much lower. [13] How can users make an informed decision about how to use the product without knowing how “much lower” accuracy rates are? How can they check this accuracy without reverting to their old comment-coding methods? And even if accuracy is at 95%, how does a leader explain to the remaining 1 in 20 employees that their comments may have been mistagged, and thus ignored?
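
One hedged way to approach the accuracy question without re-coding every comment is to hand-check a small random sample and put an interval around the observed agreement rate. The sketch below assumes a hypothetical audit in which a reviewer marks each sampled machine tag as correct or not; the function names, the sample size of 200, and the count of 180 correct tags are all illustrative, and the Wilson score interval is a standard statistical choice rather than anything Perception documents.

```python
import math
import random


def draw_audit_sample(comment_ids, k, seed=0):
    """Pick k distinct comment IDs at random for manual review (seeded for repeatability)."""
    rng = random.Random(seed)
    return rng.sample(comment_ids, k)


def wilson_interval(correct, n, z=1.96):
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% confidence."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = correct / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width


# Hypothetical audit: 5,000 comments in total, 200 reviewed by hand.
comment_ids = list(range(5000))
sample = draw_audit_sample(comment_ids, 200)

# Invented result: the reviewer agreed with the machine tag on 180 of the 200.
correct_tags = 180
low, high = wilson_interval(correct_tags, len(sample))
print(f"Observed accuracy {correct_tags / len(sample):.1%}, "
      f"plausible range {low:.1%} to {high:.1%}")
```

The point of the interval is that a spot check of a few hundred comments gives a range rather than a single number, which is a more honest basis for deciding how much weight to put on the tags.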


__

[1] Naz Beheshti, “Our Approach to Employee Engagement is Not Working,” Forbes, Sep 30, 2018, https://www.forbes.com/sites/nazbeheshti/2018/09/30/our-approach-to-employee-engagement-is-not-working/#2416e7517274, accessed November 2018.

[2] Jacob Morgan, “Why the Millions We Spend on Employee Engagement Buy Us So Little,” Harvard Business Review, Mar 10, 2017, https://hbr.org/2017/03/why-the-millions-we-spend-on-employee-engagement-buy-us-so-little, accessed November 2018.

[3] Scott Judd, O’Rourke, and Grant, “Employee Surveys Are Still One of the Best Ways to Measure Engagement,” Harvard Business Review, Mar 14, 2018, https://hbr.org/2018/03/employee-surveys-are-still-one-of-the-best-ways-to-measure-engagement, accessed November 2018.

[4] Luminoso, “Employee Feedback and Artificial Intelligence: A guide to using AI to understand employee engagement” (PDF file), downloaded from Luminoso website, https://luminoso.com/writable/files/White-Paper-Employee-Feedback-and-AI.pdf, accessed November 2018.

[5] Adam Rogers. “How Unified Employee-Feedback Tools are Revolutionizing HR”. Ultimate Software’s Blog, Feb 7, 2017. https://www.ultimatesoftware.com/blog/employee-feedback-perception/, accessed November 2018.

[6] Dan Ring. “Machine learning drives Kanjoya performance review software”. Tech Target, Apr 2016. https://searchhrsoftware.techtarget.com/feature/Machine-learning-drives-Kanjoya-performance-review-software, accessed November 2018.

[7] Raja Sengupta, “How Natural Language Processing can Revolutionize Human Resources”. AIHR Blog & Academy. https://www.analyticsinhr.com/blog/natural-language-processing-revolutionize-human-resources/, accessed November 2018.

[8] Cyrus Sanati, “How big data can take the pain out of performance reviews,” Fortune, Oct 9, 2015, http://fortune.com/2015/10/09/big-data-performance-review/, accessed November 2018.

[9] Dave Zielinski, “Artificial Intelligence and Employee Feedback”, Society for Human Resource Management, May 15 2017, https://www.shrm.org/resourcesandtools/hr-topics/technology/pages/-artificial-intelligence-and-employee-feedback.aspx, accessed November 2018.

[10] Frank Partnoy, “What Your Boss Could Learn by Reading the Whole Company’s Emails”. The Atlantic, Sep 2018, https://www.theatlantic.com/magazine/archive/2018/09/the-secrets-in-your-inbox/565745/, accessed November 2018.

[11] Michael Rochelle and Friedman, “LinkedIn Acquires Glint, Bolstering Its Position as an HCM Market-Maker”, Human Resources Today, Oct 15, 2018. http://www.humanresourcestoday.com/data/glint/survey/?open-article-id=9072138&article-title=linkedin-and-glint—potential-hcm-technology-powerhouse-in-the-making, accessed November 2018.

[12] Seth Grimes, “Where are the text analytics unicorns?” VentureBeat, May 3, 2015. https://venturebeat.com/2015/05/03/where-are-the-text-analytics-unicorns/, accessed November 2018.

[13] Sanati, “How big data can take the pain out of performance reviews”.

Great article about machine learning and its applicability in an HR setting. The author has done a fantastic job of explaining the two key themes (sentiment + theme clustering) and the value of such a system. This also reminds me of the Aspiring Minds case, where software was built to connect job applicants to companies recruiting for talent.

The challenges that the author mentioned would probably have to be handled on a case-by-case basis. Each organization will have its own vernacular and jargon, and HR will have to work on getting a tailor-made version of the product. There will also be some errors; the hope is that the algorithm gets better with time and accuracy can increase beyond 95%.

For the machine learning part – I would have liked to see some more rigor and discussion around the three main components we learnt about (clustering, regression, and classification). There could also have been an argument about the different types of learning (supervised, unsupervised, etc.).

Overall though an enjoyable read. I learnt a lot about machine learning and its potential applicability in an HR setting.

I think it’d be interesting for Perception to also think about doing predictive analytics and providing recommendations or action plans that HR professionals could use as a jumping-off point to either improve or maintain employee sentiments. Quite a bit of learning would be required, of course, but the potential outcome is very strong. Thinking back to my corporate job, I know one challenge we faced with surveys was that our post-survey action plans were always short-sighted and never really substantial. It seems like an objective third-party recommendation would be helpful in steering us the right way, as opposed to forcing the employees to come up with solutions that would improve their experience. The challenge we saw there was that it was hard for junior-level employees to be totally forthcoming, so a database that can translate and synthesize natural language would be helpful to ensure everyone is heard.

This was a thought-provoking read and highlighted some of the challenges HR teams face today. It reminded me of taking pulse surveys at my previous company, and being shown the results – although as sashafierce mentioned, one of the key issues is that even when such sentiments are aggregated, creating action items and actually implementing any changes seems to be a missing step for many organizations. I see the value of what Kanjoya is doing mostly from a time-saving perspective. I wonder if some of their analysis could include things like benchmarks and sample action plans from comparable companies.

In response to the challenge on organization-specific vernacular, I think this is a case where implementation could vary on a per-company basis. I.e., if I were in People Ops at Salesforce I might work with an account manager to ensure that “Ohana” was being appropriately captured by the system; if I were at Google, “Googly” might be an area of focus for me. The initial output might be something as rudimentary as a word cloud for my org, but over time I’d expect those special terms to become more embedded into the system and thus more meaningful. It probably wouldn’t make sense to introduce it into Kanjoya’s ecosystem as a whole, but I imagine there’s a way to keep the central database separate while having meaningful personalization for larger clients.

Thank you for writing such a thought-provoking piece. Using ML to analyze employee feedback was a surprising use of the technology.

I think that the most controversial application is the possibility of scanning employee emails to look for early signals of employee discontent in order to implement preventive retention measures. However, this practice may fall into a grey area where employee privacy is sacrificed for the sake of enhanced company surveillance.

This last implication may raise an additional objection to the three challenges that you highlighted towards the end of the article: what boundaries should ML technology observe when analyzing passively collected (potentially unauthorized) employee-generated texts such as emails and messenger chats, in order to protect employee privacy?

Great article! In my mind, questions 1 and 3 have a similar response – we cannot rely on machine learning alone. There is a need for human intervention, and an appropriate mix of human and artificial intelligence is probably what’s most effective. What that means for question 1 is: at the risk of oversimplifying, we can input a list of distinct key words that each organization uses for the same concept in order to customize it for different organizations. As for question 3 around accuracy concerns, it is critical to compare and contrast what the data reveals with human intuition/perception and avoid over-indexing on specific data points. While this is in direct contradiction to the concept of using technology to gauge feelings, simple things like talking to people in the hallway/cafeteria can reveal interesting insights that may or may not have been captured in the data. Of course, this approach isn’t scalable, but it may be a good way to sense-check or confirm what the data is saying, particularly before making radical changes based on that data.
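
The keyword-list idea for question 1 could look something like the following rough Python sketch, which rewrites organization-specific terms into a shared vocabulary before any tagging happens. The glossary entries and the `normalize_vernacular` helper are hypothetical; what a term like “Ohana” or “Googly” should map to is a judgment each organization would have to make, and nothing here describes how Perception actually handles custom vocabulary.

```python
import re

# Hypothetical per-organization glossary: company term -> shared concept.
# The right-hand values are invented for illustration only.
GLOSSARY = {
    "Ohana": "company culture",
    "Googly": "culture fit",
}


def normalize_vernacular(text, glossary):
    """Replace whole-word, case-insensitive matches of glossary terms with shared concepts."""
    for term, concept in glossary.items():
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", flags=re.IGNORECASE)
        # A function replacement avoids any backslash handling in the concept string.
        text = pattern.sub(lambda match, concept=concept: concept, text)
    return text


comment = "I feel like our ohana spirit has faded since the reorg."
print(normalize_vernacular(comment, GLOSSARY))
```

A lookup like this is deliberately crude: it cannot tell when a term is used ironically or in a new sense, which is part of why the human sense-check described above still matters.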

This is a really interesting application of NLP and it’s great to see these types of models applied to People Analytics! I think the concerns you highlighted above make a lot of sense and are common in this space. To the first concern, one approach might be to have clients manually tag previous submissions with sentiments/concerns and use that data for client-specific model training. To the second concern, perhaps Perception could identify submissions that don’t closely match any predetermined concern or sentiment and have them manually reviewed by the client?

I would also propose one additional concern: responses that contain multiple themes or sentiments. I would be interested to learn more about what happens in cases where employees regularly write about one primary theme and another secondary theme. Is Perception able to identify the trend that the second theme is mentioned frequently across posts even if it is never a primary submission topic?
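
Both ideas in this comment, routing poorly matched submissions to manual review and tracking themes that only ever appear as secondary topics, can be illustrated with a small Python sketch. It assumes each comment already comes with a dictionary of theme probabilities; the scores, the 0.4 and 0.3 cut-offs, and the function names are all made up for illustration and are not drawn from Perception’s product.

```python
from collections import Counter

# Hypothetical theme probabilities for three comments (invented values).
tagged = [
    {"compensation": 0.80, "communication": 0.35, "work-life balance": 0.05},
    {"senior leadership": 0.70, "communication": 0.40},
    {"compensation": 0.25, "communication": 0.20, "work-life balance": 0.15},
]


def needs_review(theme_probs, min_confidence=0.4):
    """Flag a comment when no theme is matched with at least min_confidence.

    Assumes theme_probs is non-empty.
    """
    return max(theme_probs.values()) < min_confidence


def secondary_theme_counts(records, threshold=0.3):
    """Count how often each theme appears as a non-primary theme above threshold."""
    counts = Counter()
    for probs in records:
        # Rank themes from most to least probable; the first is the primary theme.
        ranked = sorted(probs, key=probs.get, reverse=True)
        for theme in ranked[1:]:
            if probs[theme] >= threshold:
                counts[theme] += 1
    return counts


review_queue = [i for i, probs in enumerate(tagged) if needs_review(probs)]
secondary = secondary_theme_counts(tagged)
print("Send to manual review:", review_queue)
print("Secondary theme counts:", dict(secondary))
```

In this toy data, communication is never the primary theme of any comment yet shows up twice as a secondary one, which is exactly the pattern the question above asks about.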