Linux won the servers, but it’s dead on the desktop

Linux is present in very different markets where it has had vastly different results. What explains those differences?

Linux’s history on the platforms where it is active provides insight into what drives winning and losing in an environment shaped by network effects.

Linux has had extraordinary results on servers, but its desktop systems have been a complete failure. It has also been quite successful in mobile as the basis of the Android OS. Direct and indirect network effects largely explain those differences in adoption across its products.

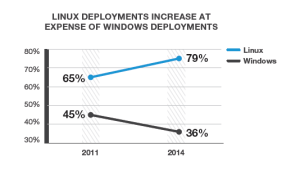

Results of a 2014 Linux Foundation survey on usage in the enterprise sector perfectly illustrate the winner-takes-all pattern often caused by network effects. As more Linux systems are deployed to run enterprise applications, the platform grows and becomes more attractive to new users (direct network effects). The growing installed base also makes Linux a more attractive target for developers, and the software they build in turn attracts more users (indirect network effects).
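To make the winner-takes-all intuition concrete, here is a toy model in Python. It is not taken from the survey, and every name and number in it (`simulate_adoption`, `strength`, the 60/40 starting split, 100 newcomers per step) is an assumption chosen purely for illustration. Each round, a fixed batch of new users splits between two platforms in proportion to quality times installed base raised to a power that stands in for the strength of network effects.

```python
def simulate_adoption(initial_users, quality, strength, steps, newcomers=100.0):
    """Toy mean-field model of platform adoption (illustrative only).

    Each step, `newcomers` users are split between platforms in proportion
    to quality * installed_base ** strength. `strength` is a hypothetical
    knob: 0 means no network effects, values above 1 mean strong ones.
    """
    users = [float(u) for u in initial_users]
    for _ in range(steps):
        weights = [q * (u ** strength) for q, u in zip(quality, users)]
        total = sum(weights)
        users = [u + newcomers * w / total for u, w in zip(users, weights)]
    return users


def shares(users):
    """Convert absolute user counts into market shares."""
    total = sum(users)
    return [u / total for u in users]


if __name__ == "__main__":
    start = [60, 40]        # assumed small head start for platform A
    equal_quality = [1.0, 1.0]
    for strength in (0.0, 1.0, 2.0):
        final = shares(simulate_adoption(start, equal_quality, strength, steps=200))
        print(f"strength={strength}: shares={[round(s, 3) for s in final]}")
```

With no network effects the head start washes out and the two equally good platforms end up close to even. With strong network effects the same modest head start compounds until the leader holds nearly the whole market, which is the pattern the survey results suggest for servers.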

The value proposition seems very clear: “make it good, and make it free”. For a product aimed at a somewhat more sophisticated customer, such as an enterprise deploying a complex technical solution, it is clear why such a value proposition could lead to success. But while beating Windows under those conditions appears intuitive, why was Linux able to beat the other Unix-based systems that were being developed in the nineties? The answer isn’t clear, but the question is certainly being discussed within the tech world (see e.g. this blog post on why Linux beat other open-source solutions). It seems that, beyond network effects, it’s about finding just the right product that speaks to users, which requires a good dose of skill and luck, and from there leveraging network effects to become the dominant player.

In the server market, I do not expect major changes in the near future. Mainly, I believe that version compatibility constitutes a high barrier to switching, and the technical, functional nature of the application reduces the incentive to switch as long as it works. I therefore see this market as much less fickle than many other modern platforms targeted at the end user.

If Linux is such a good product and free, how can we explain it having <1% of the market share in the desktop world? To me, again, the product itself is the number 1 cause, and network effects have amplified market reactions to make it one of the biggest losers in that world. The main issue is: Linux could be user-friendly, but really, it just isn’t. It never got the momentum needed to start growing fast from a small user base. No matter that it is free: a product that doesn’t meet users’ needs will always struggle to take off. The fact that little hardware today is built for Linux further discourages users from buying, and discourages complementors along the chain (hardware manufacturers, retailers) from making it easy to buy a Linux-equipped laptop.

There have been a few attempts by enthusiastic customers to broaden usage of Linux as a desktop operating system. Most notable perhaps is the city of Munich’s decision to switch all official city computers to Linux. Munich’s experience, though, makes it clear just how strongly network effects work against growth, and indeed how they may push users of the minority platform back to the majority platform, i.e. Windows. The major point of course is compatibility: when platforms are proprietary, cross-platform collaboration can become so complex that the incentive to switch back becomes very strong. After a re-evaluation of their choice last year, Munich is staying on Linux, for now. Meanwhile, in the rest of the world, Linux on the desktop has been dead for a while, and a comeback won’t happen anytime soon.

fully agree on user-friendly point. i get the urge to install ubuntu every so often, and almost invariably go back to windows after a few days because of some ridiculous bug or glitch that requires super-involved effort to fix. the terminal will always be the domain of the power user, and until a distro buries the terminal like Windows did with Powershell/cmd, the masses will prefer windows.

And today, in 2016, it’s looking even worse. Linux desktop has always been described as hard to use. The techies (of which I’m one) simply couldn’t hear it. Config files, /etc files, ~/.config/something, /whatever/wherever: every app, every function was an editor-and-file-placement configuration issue that could only be resolved via Google Search. No amount of intuition, no amount of directed guessing, could get an end-user into a comfortable environment. Pretty much everything was a one-off. Distributions tried, but never seemed to be able to bring the “whole package” together.

Now, as things are getting somewhat better on the configuration side, we are starting to face simply massive fragmentation and functionality removal. Why use X11? You can have both X11 and Wayland layers of vastly inconsistent and much more incomplete functionality, since, well, whatever you’re doing was an “edge-case” or is, well, simply really, really hard. Too hard for us to figure out. But we surely know X11 has to go away. Yep, for sure. Why have any sort of consistent look, feel, or “guessable” functional access when you can start by figuring out how to install a dozen “themes” just to get a sorta, kinda, common color appearance between apps? Oh, what should I poke at to actually do something? Well, that’s another question altogether.

I’m not ranting on the X11 vs. Wayland thing. It’s just a great example for just about everything these days.