WHY YELP ISN’T RELEVANT + WHAT CAN BE DONE

No flies or fictional characters were harmed in the making of this post. All names of cast and crew have been disguised, but our story reflects real events that occurred on October 31st.

Act I – An Inciting Incident

A lucky fly on the wall of a Mini Cooper recently traveled back with 4 passengers from State Bird Provisions, a Michelin-starred restaurant in San Francisco. The foursome had spent the evening there celebrating Kissy Suzuki’s 56th birthday. Flymaster Fly™ reported back on the conversation –

Kissy – The dim sum there was great – I loved it! The crowd was fun too!

Dr. No – What a hellhole. I’d sooner call it ‘Bird Flu’. Thank goodness all of you ate my dishes. I’m glad you all enjoyed it, but I’m having some kabab as soon as I get home.

Cash-Strapped Khashoggi (CSK) – It was alright. It doesn’t stand up to the places I used to frequent with my crew in Hong Kong, but I suppose it was fine. Nothing amazing, though.

Sonja Petrovskaya – Ambiance was fun, but the servers were too familiar. I’d have to agree with CSK – food was just alright. Dim sum dumbed down. Fun place overall though.

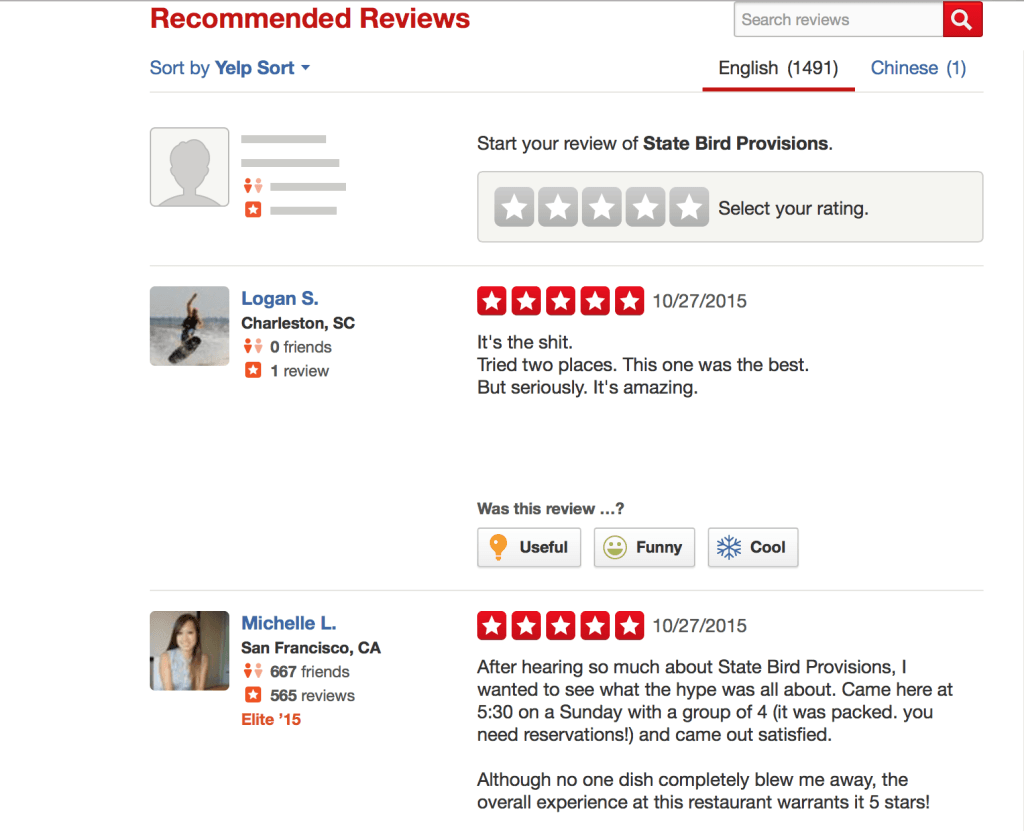

What’s wrong with this picture? This crowd of four comes from the same socio-economic background (yes, even CSK at this point), typically shares similar tastes, and this place has the Michelin stamp of approval, after all. If they all posted reviews to Yelp, who should I believe? Worse yet, who do I trust when it’s not just a crowd of four, but a crowd of 1,490 and counting, and I don’t even know anything about those people?

Act II – The Conflict

This brings us to the problem of relevance on Yelp, or the lack thereof, when it comes to reviews of experiences that are highly subjective, such as restaurants.

The fact of the matter is, Yelp provides no dimension to capture this when reviews are submitted. As a consumer of reviews, I’m therefore left to blindly guess the relevance of a given review, or even the relevance of the average star rating from 1,500 people vis-à-vis my own taste. The point people make about the “law of large numbers” – that an average over so many people should be indicative – doesn’t hold, because I don’t know who that group is, or what standard they represent. “What is their average an average of?”

To summarize, these are the reasons why Yelp restaurant reviews fail to capture and communicate relevance, and also why their accuracy can’t be trusted:

- (1) No measure of the reviewer’s taste(s) relative to my own (i.e., I don’t know who these people are, and only 1% of Yelpers post reviews, so there is already some inherent bias in who counts as “the Yelp reviewer”)

- (2) No measure of context of the reviewer’s visit to the restaurant

- (3) No segmentation of review criteria (i.e., cuisine, setting, service, etc. are not broken out. A lump-sum average star score doesn’t show which of these factors influenced the rating more or less)

- (4) Social influence bias (“Research at MIT suggests [online reviews] are systematically biased. When it comes to online ratings, our herd instincts combine with our susceptibility to positive “social influence.” When we see other people have enjoyed a hotel or restaurant — and rewarded them with a high online rating — this can cause us to feel the same positive feelings and to likewise provide a similarly high rating.” On Yelp: 21.9% are 3.5 stars, 61.9% are 4 stars, and 16.2% are 4.5 stars – also demonstrating a herding effect to “middle” ratings)

- (5) Fraudulent reviews

- (6) Yelp filters reviews, but doesn’t do the best job filtering

In Act III, we focus on potential solutions for the first three points, which affect the “relevance” of reviews.

Act III – Dénouement

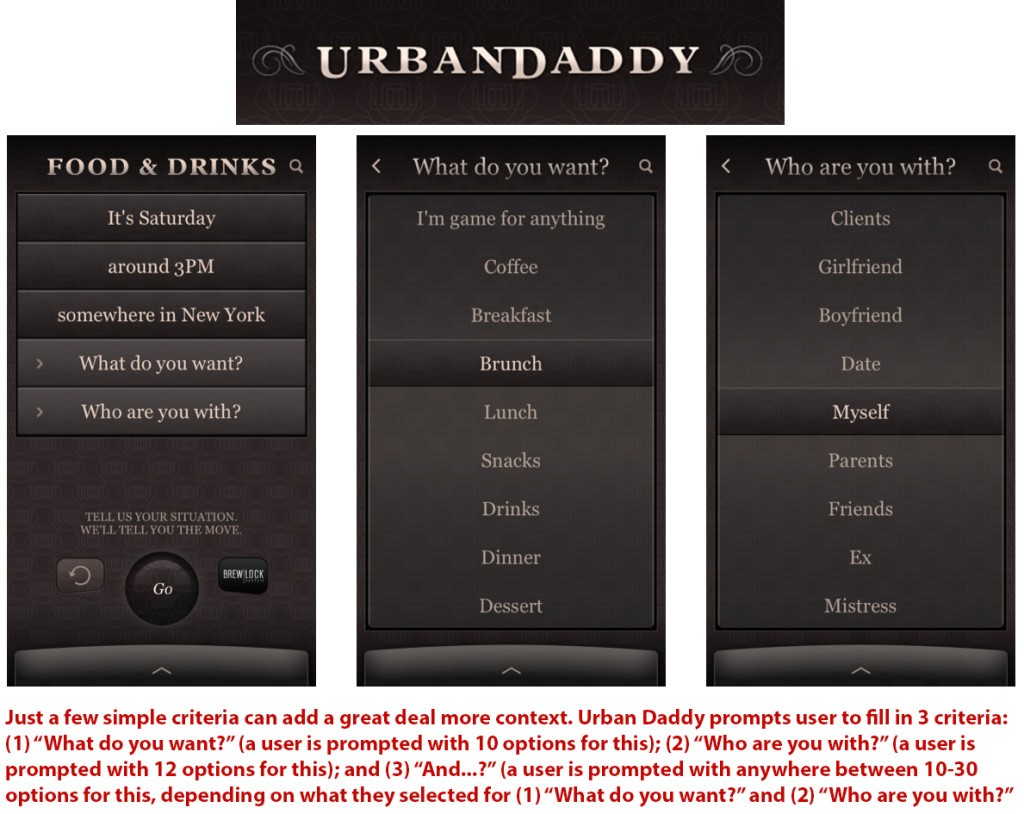

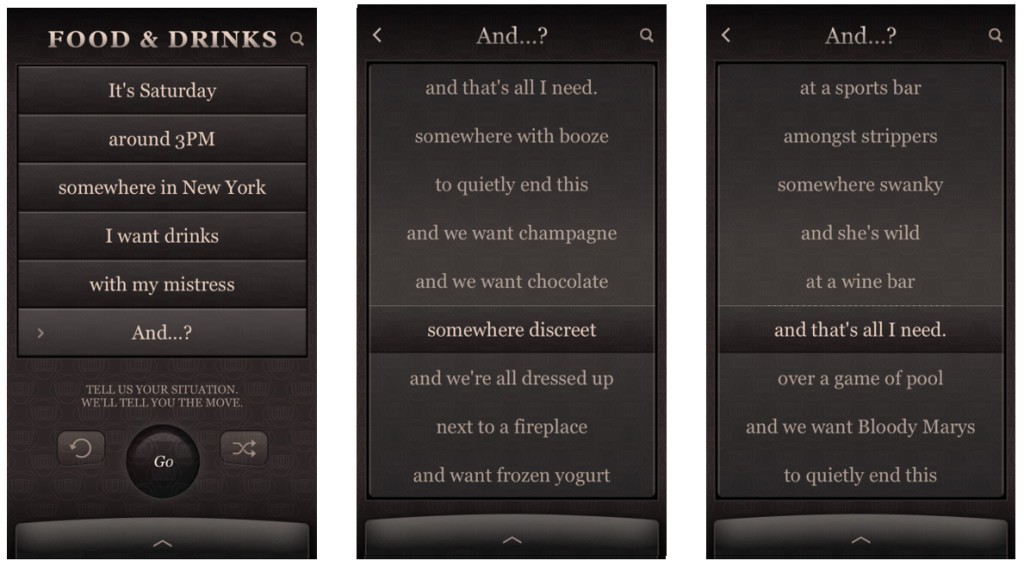

For possible solutions to the problem of relevance, we can tear a page out of “Urban Daddy’s” playbook – yes, you read correctly, “Urban Daddy”.

If Yelp were to incorporate some simple categories of criteria such as these when reviews are posted, users could then look up reviews with a higher level of granularity. I, as a consumer, could use these categories to filter down to reviews more relevant to my particular context and taste(s). Additionally, having a given review broken out into simple categories such as “Cuisine”, “Ambiance”, “Noise level”, and “Overall” would further enhance relevance for readers.

This type of solution could also help increase relevance of other types of more subjective experiences/products that are reviewed – hotels, tours, etc…
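To make the idea a little more concrete, here is a minimal sketch of what category-based filtering could look like. Everything in it is hypothetical: the `Review` structure, the taste tags, and the star scores are invented for illustration (loosely inspired by the car ride in Act I) and are not Yelp’s or UrbanDaddy’s actual data model.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Review:
    reviewer: str
    tags: set[str]            # hypothetical self-described taste/context tags
    scores: dict[str, float]  # hypothetical per-category star scores


def filter_reviews(reviews, wanted_tags):
    """Keep reviews whose author shares at least one tag with the reader."""
    return [r for r in reviews if r.tags & wanted_tags]


def category_averages(reviews):
    """Average each category separately instead of one lump-sum star score."""
    by_category = {}
    for r in reviews:
        for category, score in r.scores.items():
            by_category.setdefault(category, []).append(score)
    return {category: mean(values) for category, values in by_category.items()}


# Invented scores and tags, for illustration only.
reviews = [
    Review("Kissy", {"likes a lively crowd"}, {"Cuisine": 5.0, "Ambiance": 5.0}),
    Review("Dr. No", {"prefers kabab"}, {"Cuisine": 2.0, "Ambiance": 1.0}),
    Review("CSK", {"dim sum purist"}, {"Cuisine": 3.0, "Ambiance": 3.0}),
    Review("Sonja", {"dim sum purist", "likes a lively crowd"},
           {"Cuisine": 3.0, "Ambiance": 4.0}),
]

# A reader who identifies as a "dim sum purist" sees only matching reviewers.
relevant = filter_reviews(reviews, {"dim sum purist"})
print([r.reviewer for r in relevant])
print(category_averages(relevant))
```

The point of the sketch is only that two small additions at review time (a few tags about the reviewer and a score per category) would be enough to support this kind of filtering; how Yelp would actually collect or weight them is an open question.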

People go to Yelp to see if the food at a restaurant is good. Then users go through photos and reviews to discover more about the ambiance and service of the restaurant.

Yelp has captured the crowds, but it is failing at structuring the data. Reviews can focus on any aspect of a restaurant experience, but users have to look for reviews that talk about what matters to them. Yelp can definitely step up its data collection and can even do some analysis of this data to make users’ lives easier when finding restaurants or any other business.

I agree with you that categories would create a lot of value for Yelp’s users. And the solution is as simple as changing the format of user reviews.

Interesting post – I also had an experience of places that got really good reviews and I was underwhelmed when I actually went. Yes, there is a “standards” problem, but I’m not sure how Urbandaddy solves this problem. First of all, who is behind Urbandaddy’s reviews? This looks like curated content, which is arguably more prone to “biased” reviews. If the argument is to add Urbandaddy’s functionality to Yelp, we are still stuck with the same crowd providing reviews, and I don’t see how Yelp would be able to segment the users by tiers of “high/medium/low standards”. We may just have to live with the fact that the average on Yelp is an average of average tastes that are supposed to please the majority of people, not necessarily the 1% 🙂 That being said, there can def be another app to cater to the people who have high standards and a fine palate, Yelp just was never meant to be that.

Just to clarify – this post has nothing to do with catering to finer tastes, but with the problem of anyone being able to understand what the “standards” of another reviewer are. Yelp cannot truly continue to satisfy the majority of people, because of reviews’ lack of relevance to the individual reading them, having to do with the reasons listed in the post. It’s not about the “1%” that you bring up – the 1% in the post is about the fact that the majority of people have to rely on an average only coming from ~1% of Yelp users, who actually regularly post reviews. Beyond this, there is the problem of positive social influence bias, which means reviewers are more prone to following positive reviews that have already been given with their own positive review (and far more reluctant to follow a negative review with their own negative review) – leading to a herd mentality, and ~75% of reviews being between 3.5 and 4 stars. If this shockingly high % of restaurants have a 3.5-4 star rating, something doesn’t add up, and this star rating ceases to be indicative of much. I disagree that Yelp is actually an average of average tastes, because the regular reviews only come from 1% of people. If one thinks about who this type of person is (i.e. the “regular Yelp reviewer”), I don’t think they represent the average person – the average person doesn’t spend time writing a review there, unless they’ve had an extremely poor or extremely good experience, perhaps prompting them to write a one-time review.

The example of UrbanDaddy simply has to do with the filtering interface shown in the post. Just as UrbanDaddy prompts users to input some additional criteria about themselves and their context, Yelp – or any other crowd-sourced review site such as TripAdvisor – might benefit from adding this extra granularity when reviews are created, and from letting users filter to find reviews more relevant to them. For example, if I can select criteria that help define my taste when looking for reviews (such as “I’m a hipster”/“I love a quiet place”/etc.), and filter through the reviews of State Bird Provisions and be matched with reviews by people who share one or more of those characteristics, I’m a lot better off than sifting through the 75% 3.5-4 star reviews, without any context or knowledge of these people’s standards relative to my own.

I think the selection of places by location, price range, a few pictures, rating and a very quick swipe through the review for something one would really hate goes a long way to have a pretty good experience with little investment in research. However, I agree that Yelp could provide a much better experience if there was a way of seeing ratings/reviews from people whose ratings are similar to mine. If I were in the shoes of Yelp’s management I would think long and hard about ways of getting people to give places stars to build a better understanding of the likes of its users.

I would argue that one of the reasons Yelp captured so much market share and became a verb (“Let’s yelp it”) is the simplicity of the search. I only need to enter a place and a description (“restaurant” or “brunch”) and voila. I can filter by location, price and cuisine, and see the number of reviewers, which gives me a general idea of popularity within the Yelp community. I might not agree exactly with the reviewer profile but I know that a 4+ star restaurant will generally be good, and can then look at the restaurant website, menu, and maybe a couple comments to make my decision. The ease and accessibility of the site is what works really well for its network effects, and I think they took the wise route to make it so.